Motivation

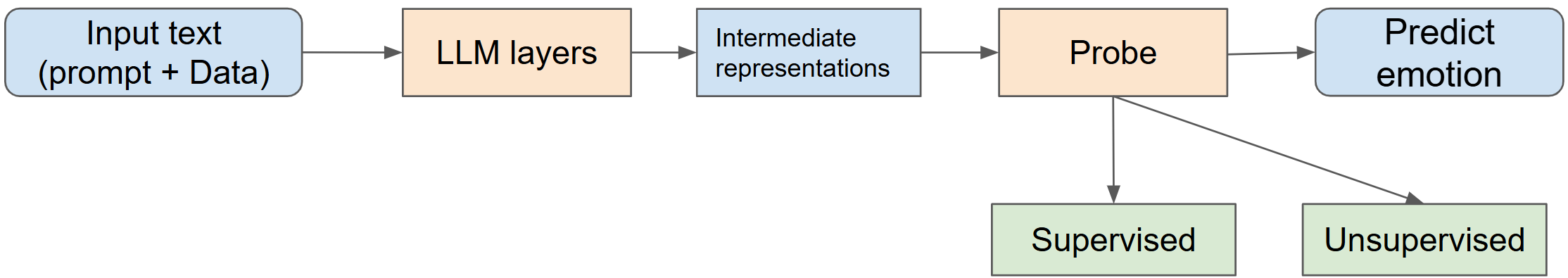

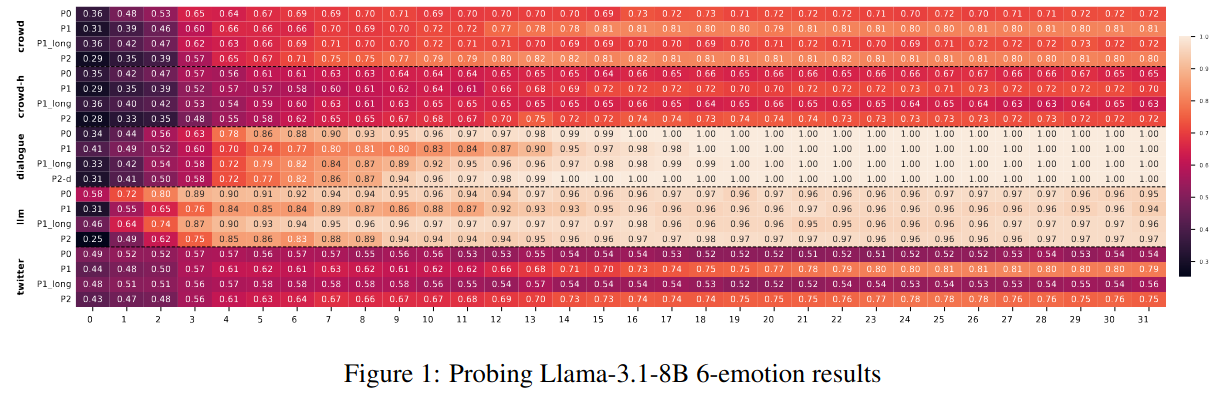

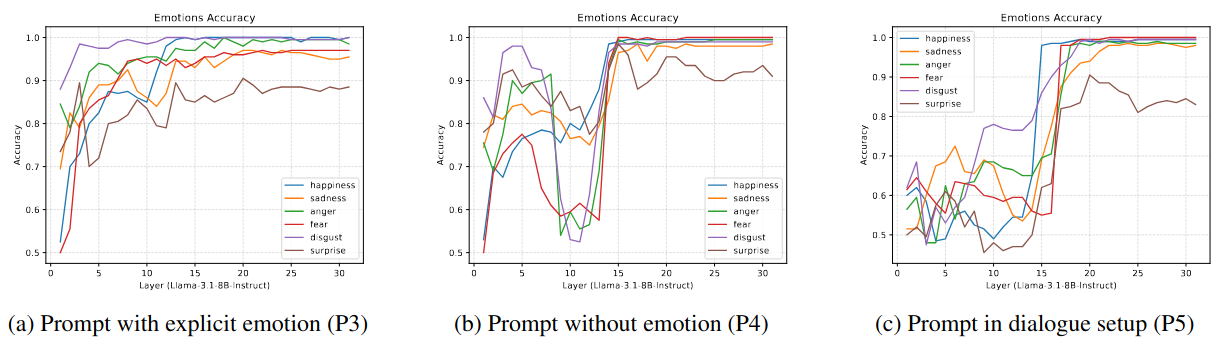

Although several works have used interpretability techniques to understand the emotion identification capabilities of LLMs, their findings contradict one another on which layers (early or middle) hold the emotion representation. We hypothesize that these contradictions arise from the use of different prompts and of datasets with different difficulty levels. We therefore analyze the setup using prompts of varying expressivity across datasets of different difficulty levels, to gain an overall understanding of LLMs' ability to identify emotions at the representation level. We then assess the reasoning ability of these models on an emotion comprehension task.

Key Findings

1) Using probing techniques, we show that which layers hold the emotion representation depends on the instruction prompt and on how clearly emotion is expressed in the input data.

2) In later layers, there exist intrinsic emotion reading vectors that are similar across datasets and can be used interchangeably, which suggests that they are foundational.

3) LLMs' performance on emotion reasoning tasks remains poor. We observe that CoT mostly produces reasoning traces that increase the model's confidence in its original answer, especially when the model is already confident in it.

4) This motivates the need for methods that leverage implicit emotion representations to improve LLMs' explicit reasoning capabilities.